Originally published on LinkedIn on 2025-11-23.

It’s common for IT Administrators managing classical enterprise Virtual Machine (VM) infrastructure to overlook powerful, existing tools for automation and scale-out. While the benefits of cloud-native deployment patterns—like disposable, self-configuring infrastructure—are well-understood, many don’t realize that similar, workflow-based efficiency is achievable for their on-premises VMs. The necessary technology has been available for years.

The most effective solution for modernizing VM deployment involves adopting a two-stage approach:

- Image Preparation: Creating standardized, minimal, and repeatable VM base images with Packer or other tools.

- Runtime Configuration with Cloud-Init: Provisioning and customizing these images at launch time.

This combination shifts the focus from manually building and configuring each VM to maintaining a reliable, versioned deployment pipeline, making the infrastructure significantly more maintainable and scalable.

A Simple Lab Environment

To test this, I tend to use a very basic setup. The next part focuses on cloud-init; image preparation with Packer is a separate topic.

My lab setup is a TUXEDO InfinityBook with KVM, QEMU, libvirt and cloud-init. We will use an AlmaLinux Generic Cloud image as the base image and then create the needed files from scratch to understand how the pieces fit together.

Tools needed on the Tuxedo OS workstation for the development environment:

sudo apt install qemu-kvm libvirt-daemon-system libvirt-clients bridge-utils virt-manager cloud-utils

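The virt-manager package is optional; it is a graphical interface for managing the virtual machines. If you skip it, make sure the virtinst package is installed, since it provides the virt-install command used below.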
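Before running the lab script, it can help to confirm that the tools are actually on the PATH. The following is a minimal sketch, not part of the original setup: the helper name `missing_tools` is made up for this example, and the list of command names is an assumption about what the packages above install.

```shell
#!/bin/bash
# Hypothetical helper: print every command from the argument list
# that cannot be found on the PATH, one name per line.
missing_tools() {
  local tool
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

# Assumed command names for this lab; adjust them to your distribution.
missing=$(missing_tools qemu-img virt-install virsh cloud-localds curl)
if [ -n "$missing" ]; then
  printf 'Missing tools:\n%s\n' "$missing"
else
  echo "All lab tools found."
fi
```

An empty result means every listed command was found; anything printed points to a package that still needs to be installed.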

With a simple bash script the lab VM can be spawned:

#!/bin/bash

# Setting important shell options for robustness
# -e: Exit immediately if a command exits with a non-zero status.
#     This helps to catch errors early in the script execution.
# -u: Treat unset variables as an error.
#     This helps to prevent unexpected behavior due to typos or missing variable assignments.
# -f: Disable pathname expansion (globbing).
#     This can be useful when you want to treat asterisks, question marks, etc., literally.
# -C: Prevent overwriting existing files by redirection (using >).
#     To overwrite, you must use >| instead. This helps to avoid accidental data loss.
# -o pipefail: If a command in a pipeline fails, the pipeline's exit status is that of the last command that failed.
#     Without this, the pipeline's exit status would be that of the last command in the pipeline.
set -eufCo pipefail

SSH_PUB_KEY=$(cat "$HOME/.ssh/id_ed25519.pub")

# NOTE: Prepare lab directory (mkdir -p is a no-op if it already exists)
mkdir -p "$HOME/lab/one1"
cd "$HOME/lab/one1"

# NOTE: Get AlmaLinux-10-GenericCloud image
if [[ -a $HOME/Downloads/AlmaLinux-10-GenericCloud-latest.x86_64.qcow2 ]]; then
  : # AlmaLinux 10 GenericCloud image is already in Downloads, do nothing
else
  # -f: fail on HTTP errors instead of saving an error page; -L: follow redirects
  curl -fL -o "$HOME/Downloads/AlmaLinux-10-GenericCloud-latest.x86_64.qcow2" https://repo.almalinux.org/almalinux/10/cloud/x86_64_v2/images/AlmaLinux-10-GenericCloud-latest.x86_64_v2.qcow2
fi

# NOTE: Prepare Image for VM (thin overlay on top of the downloaded base image)
if [[ -a one1.qcow2 ]]; then
  : # one1 image already created, do nothing
else
  qemu-img create -f qcow2 -o "backing_file=$HOME/Downloads/AlmaLinux-10-GenericCloud-latest.x86_64.qcow2,backing_fmt=qcow2" one1.qcow2 25G
fi

if [[ -a user-data ]]; then
  : # user-data exists; do nothing
else
  cat >user-data <<EOF
#cloud-config
hostname: one1
fqdn: one1.qemu.internal
manage_etc_hosts: true
users:
  - name: admin
    ssh-authorized-keys:
      - $SSH_PUB_KEY
    sudo: ['ALL=(ALL) NOPASSWD:ALL']
    groups: sudo
    shell: /bin/bash
package_update: true
packages:
  - qemu-guest-agent
  - curl
runcmd:
  - dnf config-manager --set-enabled crb
  - dnf install -y epel-release
  - curl -Lo /var/tmp/minione https://github.com/OpenNebula/minione/releases/download/v7.0.1/minione
  - chmod +x /var/tmp/minione
  - sudo /var/tmp/minione --yes
EOF
fi

if [[ -a meta-data ]]; then
  : # meta-data exists; do nothing
else
  cat >meta-data <<EOF
hostname: one1
fqdn: one1.qemu.internal
EOF
fi

if [[ -a network-config ]]; then
  : # network-config exists; do nothing
else
  cat >network-config <<EOF
#network-config
network:
  version: 1
  config:
    - type: physical
      name: eth0
      subnets:
        - type: static
          address: 192.168.122.51/24
          gateway: 192.168.122.1
          dns_nameservers:
            - 192.168.122.1
            - 86.54.11.1
EOF
fi

# NOTE: Initialize VM
sudo virt-install \
  --name one1-lab \
  --ram 2048 \
  --vcpus 2 \
  --disk path=one1.qcow2,format=qcow2 \
  --network network=default \
  --os-variant almalinux10 \
  --graphics spice \
  --import \
  --cloud-init user-data=user-data,meta-data=meta-data,network-config=network-config
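The cloud-init documentation states that cloud-config user-data must begin with `#cloud-config`, so it is worth checking the generated file before booting the VM. The sketch below is an illustration only; the helper name `has_cloud_config_header` is invented here and the check runs on a throwaway file, not on the lab's real user-data.

```shell
#!/bin/bash
# Hypothetical helper: succeed only if the first line of the given file
# is exactly "#cloud-config".
has_cloud_config_header() {
  [ "$(head -n 1 "$1")" = "#cloud-config" ]
}

# Demo on a temporary file so the check can be tried without the lab.
demo=$(mktemp)
printf '#cloud-config\nhostname: one1\n' >"$demo"
if has_cloud_config_header "$demo"; then
  echo "header ok"
else
  echo "header missing"
fi
rm -f "$demo"
```

In the lab directory the same check would be `has_cloud_config_header user-data`. If cloud-init happens to be installed on the workstation, `cloud-init schema --config-file user-data` performs a much more thorough validation.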

Once virt-install starts, it connects to the console of the domain ‘one1-lab’. If you are not used to leaving a console opened by virsh: the escape character is ^] (Ctrl + ]).

Another option is to put the cloud-init files into an ISO file and attach it when the VM is initialized.

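cloud-localds one1-cloud-init.iso user-data meta-data -N network-config

# Attach the seed ISO as a CD-ROM disk. --cdrom is itself an install method and,
# depending on the virt-install version, may be rejected together with --import.
sudo virt-install \
  --name one1-lab \
  --ram 2048 \
  --vcpus 2 \
  --disk path=one1.qcow2,format=qcow2 \
  --disk path=one1-cloud-init.iso,device=cdrom \
  --network network=default \
  --os-variant almalinux10 \
  --graphics spice \
  --import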

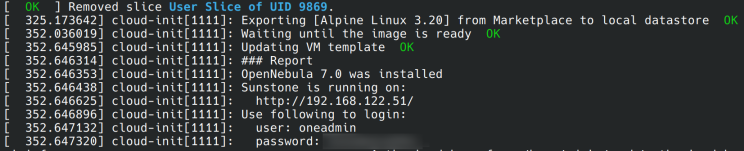

OpenNebula minione installation via cloud-init within the lab


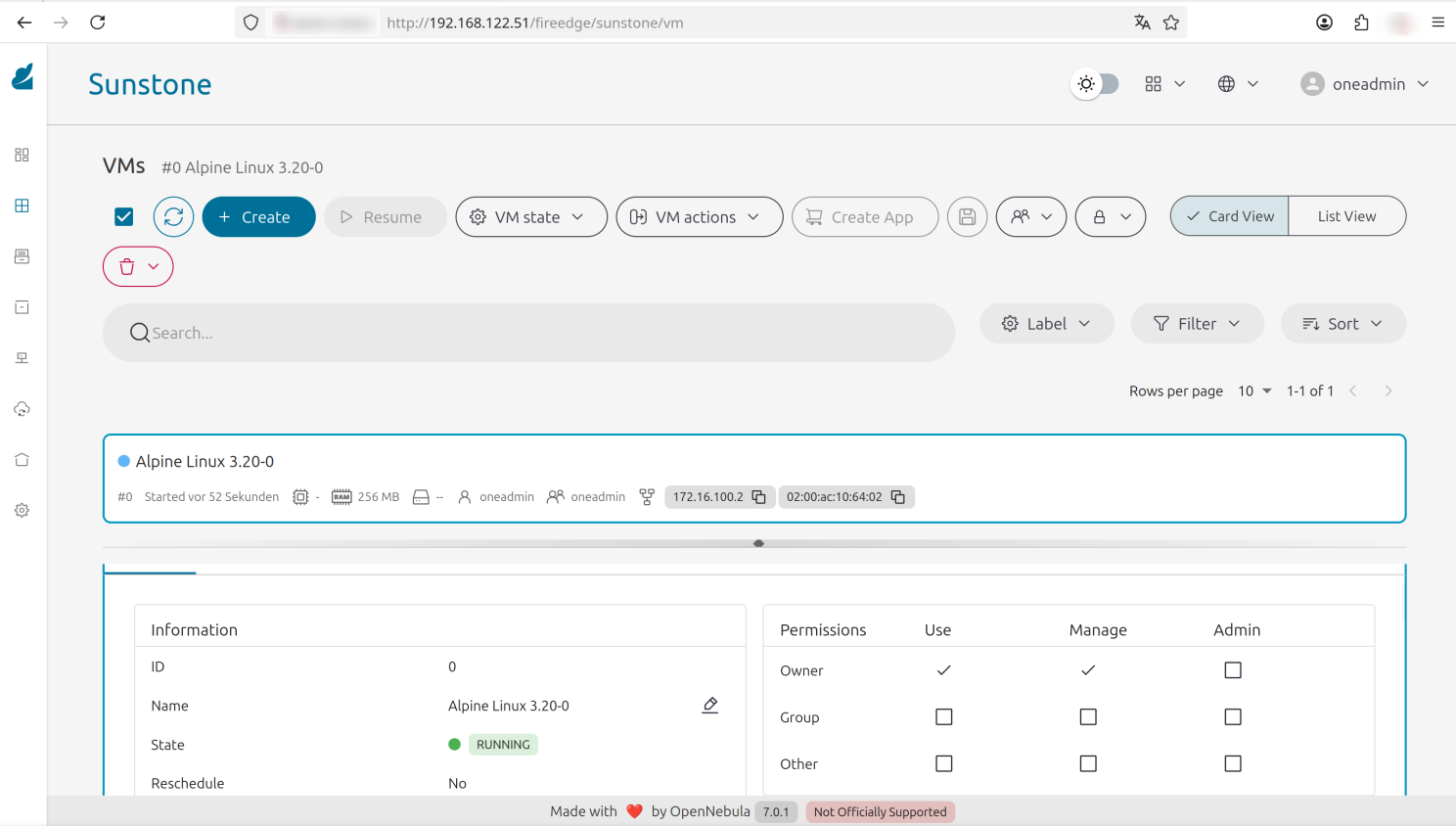

Running Alpine Linux in a nested virtualization setup within KVM


To log in to the VM from the host system, SSH can be used without a password, because the public SSH key was added to the admin user created by cloud-init via the user-data.

ssh-keygen -f ~/.ssh/known_hosts -R '192.168.122.51'

ssh 192.168.122.51 -ladmin
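Right after the VM boots, SSH may not be reachable yet and cloud-init may still be running. A small retry loop can bridge that gap. This is a sketch under assumptions: the `retry` helper is made up for this example, and the attempt count and delay in the commented usage line are arbitrary values.

```shell
#!/bin/bash
# Hypothetical helper: run a command up to $1 times, sleeping $2 seconds
# between attempts. Returns 0 as soon as the command succeeds, 1 otherwise.
retry() {
  local attempts="$1"
  local delay="$2"
  local n=1
  shift 2
  while ! "$@"; do
    if [ "$n" -ge "$attempts" ]; then
      return 1
    fi
    n=$((n + 1))
    sleep "$delay"
  done
  return 0
}

# Intended use in the lab (commented out because it needs the running VM):
# retry 30 10 ssh -o ConnectTimeout=5 -o StrictHostKeyChecking=accept-new \
#   -l admin 192.168.122.51 cloud-init status --wait
```

`cloud-init status --wait` blocks until cloud-init has finished inside the guest, so once the wrapped command returns successfully the minione installation from runcmd has completed or failed.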

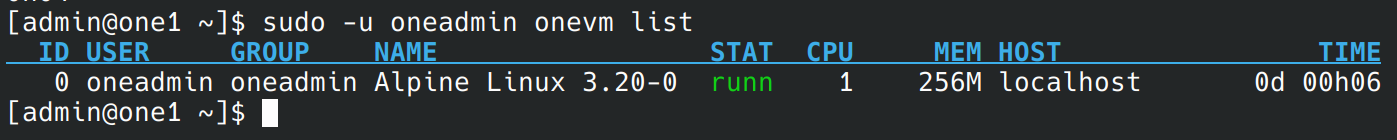

[admin@one1 ~]$ sudo -u oneadmin onevm list

Checking the running VM via the CLI within the VM


I would not recommend doing installations like minione with cloud-init, but it demonstrates what cloud-init can do. There is no Ansible, Terraform or other tooling involved, just a bit of Bash, minione (which is also written in Bash) and cloud-init. To scale out, I would recommend preparing the images with the packages already installed and only finalizing the configuration with cloud-init. A VM comes online faster when it is already prepared, and in setups where VMs are used to scale out this also saves bandwidth. For pure compute instances that hold no data, non-persistent images can save disk space too.

While enterprise adoption requires attention to further points, such as security hardening and comprehensive image lifecycle management, experimenting with Cloud-Init is the most effective way to grasp the principles behind large-scale, automated cloud deployments that every enterprise IT admin should know. Orchestration platforms from Proxmox Server Solutions and OpenNebula Systems have had Cloud-Init built in for years, and it is a rock-solid solution.
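The scale-out idea can be sketched with one prepared base image, a thin overlay disk per VM and a small per-VM meta-data file. Everything named here is an assumption for illustration: the helpers `render_meta_data` and `prepare_vms`, the VM names, the `iid-` prefix and the `base.qcow2` file name. The `qemu-img` command is only echoed as a dry run.

```shell
#!/bin/bash
# Hypothetical helper: print NoCloud meta-data for one VM.
# instance-id and local-hostname are NoCloud meta-data keys; the "iid-"
# prefix is just a naming convention chosen for this example.
render_meta_data() {
  printf 'instance-id: iid-%s\n' "$1"
  printf 'local-hostname: %s\n' "$1"
}

# Hypothetical helper: create one directory per VM name below $1 and write
# its meta-data file. The overlay disk creation is only echoed (dry run).
prepare_vms() {
  local base_dir="$1"
  local name
  shift
  for name in "$@"; do
    mkdir -p "$base_dir/$name"
    render_meta_data "$name" >"$base_dir/$name/meta-data"
    echo "qemu-img create -f qcow2 -b base.qcow2 -F qcow2 $base_dir/$name/$name.qcow2"
  done
}

lab_dir=$(mktemp -d)
prepare_vms "$lab_dir" web1 web2 web3
```

Because each overlay only stores the differences from the base image, additional VMs cost little disk space, and the shared user-data can stay small since the packages are already in the image.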

Moving from a basic understanding to full enterprise implementation involves acknowledging critical factors like security policy enforcement, robust image maintenance, and integration with your existing orchestration tools. Nevertheless, gaining practical experience with Cloud-Init serves as a fundamental gateway to understanding the mechanisms that drive scalable, automated deployments in modern cloud infrastructure.

Get familiar with the concepts, start experimenting with User Data, and begin building your own automated images today.

Happy Home Labbing!